Modern organisations increasingly rely on machine learning to make decisions about credit, pricing, demand forecasting, customer churn, fraud detection, and hiring. These models can be accurate, but many are hard to explain. When stakeholders cannot understand why a model recommended an action, they hesitate to deploy it, regulators ask tough questions, and frontline teams lose trust. Interpretable AI closes this gap by translating complex model behaviour into clear, decision-ready insights.

For professionals sharpening these skills through a data scientist course in Chennai, interpretability is not just a “nice to have.” It is often the difference between a model that stays in a notebook and one that is adopted across the business.

Why interpretability matters to business leaders

Non-technical leaders usually care about three things: risk, accountability, and outcomes.

- Risk: If a model denies a loan or flags a transaction, leaders must know whether it is doing so fairly and consistently.
- Accountability: When results are challenged (by customers, auditors, or internal teams), leaders need evidence that the decision is based on legitimate factors.
- Outcomes: Explanations help teams act. If churn risk is driven by late deliveries, operations can fix delivery performance. If it is driven by plan pricing, pricing teams can test alternatives.

Interpretability turns predictions into practical levers. It also reduces internal friction because stakeholders see the “logic” behind model outputs.

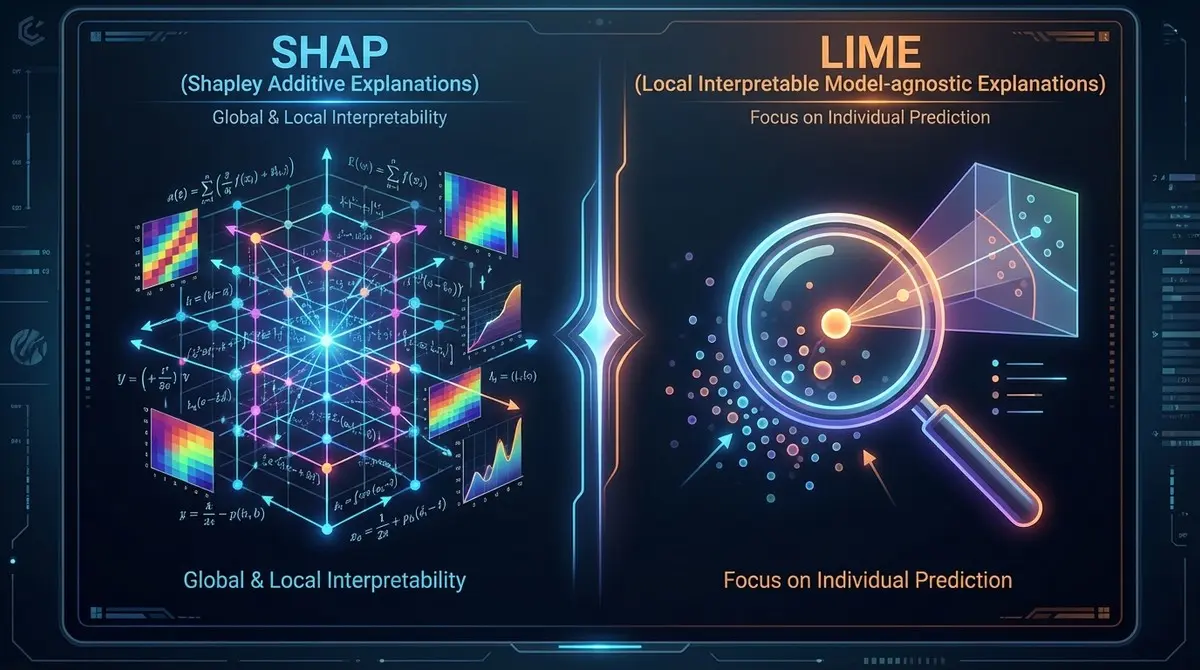

SHAP and LIME in plain terms

Two widely used approaches are SHAP and LIME. Both aim to answer: Which features influenced this prediction, and by how much?

SHAP: consistent, game-theory-based feature contributions

SHAP (SHapley Additive exPlanations) assigns each input feature a contribution value for a prediction. The key benefit is consistency: contributions are based on Shapley values from game theory, so for any single prediction they add up to the gap between that prediction and the model's baseline (average) output. In business language, SHAP can show:

- For a specific customer, the top reasons they are predicted to churn
- Across the whole portfolio, which factors most frequently push risk up or down
- Whether certain features dominate decisions (a red flag if they should not)

SHAP works well when you need both individual-level explanations (“Why this case?”) and global patterns (“What drives outcomes overall?”).
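To make the idea of “contributions” concrete, here is a minimal sketch that computes exact Shapley values by brute force for a toy scoring function. Everything in it is an assumption for illustration: the `toy_churn_score` function, its coefficients, the feature names, and the all-zero baseline are hypothetical, and this is not the API of the `shap` library, which uses faster approximations on real models.

```python
from itertools import combinations
from math import factorial


def toy_churn_score(late_deliveries, usage_drop, open_tickets):
    """Hypothetical risk score, invented for this example (not a trained model)."""
    score = 0.10 + 0.08 * late_deliveries + 0.30 * usage_drop + 0.05 * open_tickets
    # Interaction term: late deliveries hurt more when tickets are unresolved.
    score += 0.02 * late_deliveries * open_tickets
    return score


def shapley_values(model, instance, baseline):
    """Exact Shapley values by enumerating every coalition of features.

    Features outside a coalition are replaced by their baseline value.
    Only feasible for a handful of features, since the cost grows as 2^n.
    """
    names = list(instance)
    n = len(names)

    def value(coalition):
        inputs = {k: (instance[k] if k in coalition else baseline[k]) for k in names}
        return model(**inputs)

    contributions = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            # Standard Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                with_feature = value(set(subset) | {name})
                without_feature = value(set(subset))
                total += weight * (with_feature - without_feature)
        contributions[name] = total
    return contributions


# Assumed example customer and an assumed "no issues" baseline.
customer = {"late_deliveries": 3, "usage_drop": 0.5, "open_tickets": 2}
baseline = {"late_deliveries": 0, "usage_drop": 0.0, "open_tickets": 0}

contributions = shapley_values(toy_churn_score, customer, baseline)
prediction = toy_churn_score(**customer)
base_value = toy_churn_score(**baseline)

for feature, impact in sorted(contributions.items(), key=lambda kv: -kv[1]):
    print(f"{feature}: {impact:+.2f}")
print(f"baseline {base_value:.2f} + contributions = prediction {prediction:.2f}")
```

In this toy case the contributions add up exactly to the difference between the customer's score and the baseline score, which is the property that lets the same numbers serve both the individual-level and the portfolio-level view.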

LIME: fast local explanations around a single case

LIME (Local Interpretable Model-agnostic Explanations) creates a simple, interpretable model around one prediction by perturbing inputs and observing changes in output. Think of it as: “Let’s approximate the model near this single customer or transaction and explain that local behaviour.”

LIME is useful when stakeholders need a quick explanation for a particular decision, especially for complex black-box models. It is “local-first,” meaning it shines in case-by-case explanations rather than enterprise-wide interpretability programmes.
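The perturb-and-fit idea can be sketched in a few lines of plain Python. This is a simplified illustration of the LIME approach, not the `lime` package's API: the `black_box` model, its coefficients, the perturbation scales, and the kernel width are all assumptions chosen for the example.

```python
import math
import random


def black_box(x):
    """Hypothetical opaque model: x = [late_deliveries, usage_drop]."""
    z = -2.0 + 0.8 * x[0] + 3.0 * x[1]
    return 1.0 / (1.0 + math.exp(-z))


def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def local_surrogate(model, instance, scales, n_samples=500, kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear model around one instance."""
    rng = random.Random(seed)
    k = len(instance) + 1  # intercept + one slope per feature
    xtwx = [[0.0] * k for _ in range(k)]
    xtwy = [0.0] * k
    for _ in range(n_samples):
        noise = [rng.gauss(0.0, 1.0) for _ in instance]
        offsets = [s * e for s, e in zip(scales, noise)]
        sample = [v + d for v, d in zip(instance, offsets)]
        # Samples closer to the instance get a larger weight.
        weight = math.exp(-sum(e * e for e in noise) / kernel_width ** 2)
        row = [1.0] + offsets  # centred on the instance being explained
        y = model(sample)
        for i in range(k):
            xtwy[i] += weight * row[i] * y
            for j in range(k):
                xtwx[i][j] += weight * row[i] * row[j]
    coefs = solve_linear_system(xtwx, xtwy)
    return {"intercept": coefs[0], "slopes": coefs[1:]}


instance = [2.0, 0.4]  # assumed customer: 2 late deliveries, 40% usage drop
explanation = local_surrogate(black_box, instance, scales=[0.5, 0.1])
print("local prediction:", round(explanation["intercept"], 3))
print("local slopes:", [round(s, 3) for s in explanation["slopes"]])
```

The slopes describe how the model behaves near this one customer only; a different customer could yield different slopes, which is why this style of explanation suits case-by-case reviews.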

A practical workflow that business teams can trust

Interpretability works best when it is treated as part of the delivery process, not a last-minute chart in a presentation.

- Define the decision and acceptable rationale: Start by writing down what “reasonable” looks like. For credit risk, income stability and repayment history make sense; postcode alone may be problematic. This baseline helps you detect suspicious drivers early.
- Build the model and validate performance normally: Use standard evaluation (accuracy, AUC, precision/recall) plus segmented checks across key groups. Interpretability does not replace performance testing; it complements it.
- Run SHAP for global and local explanations:
  - Global: identify the top drivers overall
  - Local: explain a specific prediction for a stakeholder review
- Use LIME for targeted, stakeholder-led case reviews: LIME helps when leaders ask, “Show me why this customer was flagged.” It is especially effective in governance meetings or operational audits where the focus is on individual decisions.
- Convert explanations into action rules: The goal is not just “explain,” but “act.” If SHAP shows cancellations rise when delivery delays exceed a threshold, define operational triggers or service recovery steps.

In many capstone-style projects on a data scientist course in Chennai, this workflow is exactly what makes a solution feel production-ready to business users.
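The “global view” and “action rules” steps of the workflow can be sketched as follows. The sketch assumes you already have per-customer contribution values from a SHAP-style explainer; the customer IDs, the numbers, and the 0.20 trigger threshold are hypothetical and would need to be set with the business.

```python
from collections import defaultdict

# Hypothetical per-customer contributions to churn risk (illustrative values).
local_explanations = {
    "C001": {"late_deliveries": 0.30, "usage_drop": 0.15, "open_tickets": 0.16},
    "C002": {"late_deliveries": 0.02, "usage_drop": 0.25, "open_tickets": -0.03},
    "C003": {"late_deliveries": 0.22, "usage_drop": -0.05, "open_tickets": 0.04},
}


def global_importance(explanations):
    """Rank features by mean absolute contribution across all customers."""
    totals = defaultdict(float)
    for contribs in explanations.values():
        for feature, value in contribs.items():
            totals[feature] += abs(value)
    n = len(explanations)
    averages = [(feature, total / n) for feature, total in totals.items()]
    return sorted(averages, key=lambda pair: pair[1], reverse=True)


def customers_to_action(explanations, feature, threshold):
    """List customers whose risk is pushed up by `feature` by at least `threshold`."""
    return [
        customer_id
        for customer_id, contribs in explanations.items()
        if contribs.get(feature, 0.0) >= threshold
    ]


ranking = global_importance(local_explanations)
delivery_followups = customers_to_action(local_explanations, "late_deliveries", 0.20)

for feature, importance in ranking:
    print(f"{feature}: {importance:.3f}")
print("Service recovery candidates:", delivery_followups)
```

Mean absolute contribution is one common way to summarise global drivers; the trigger list is where the explanation turns into something an operations team can work from.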

How to present explanations to non-technical leaders

Your charts are only as good as the story they tell. A simple structure works well:

- One sentence: “The model predicts high churn risk for this customer.”
- Top 3 drivers: “Main reasons: repeated late deliveries, drop in usage, unresolved support tickets.”
- So what: “If we improve delivery SLAs and prioritise ticket resolution, risk reduces.”
- Confidence and caveats: “This is a probabilistic estimate; we monitor drift weekly.”

Avoid technical terms like “Shapley values” in leadership meetings unless asked. Translate them into “contribution” or “impact” and keep the link to business levers.
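One way to keep this structure consistent is to generate the headline and drivers directly from the contribution values. The helper below is a hypothetical sketch: the function name, the 0.5 cut-off for “high” risk, and the plain-language labels are all assumptions to adapt to your own model and audience.

```python
def leadership_summary(customer_id, risk, contributions, labels, top_n=3):
    """Turn feature contributions into a short plain-language summary.

    Only features that push risk up are listed as reasons.
    """
    drivers = sorted(
        (item for item in contributions.items() if item[1] > 0),
        key=lambda item: item[1],
        reverse=True,
    )[:top_n]
    reasons = ", ".join(labels.get(name, name) for name, _ in drivers)
    level = "high" if risk >= 0.5 else "low"  # assumed threshold
    return (
        f"The model predicts {level} churn risk for customer {customer_id} "
        f"(estimated probability {risk:.0%}). Main reasons: {reasons}."
    )


# Hypothetical mapping from technical feature names to business wording.
labels = {
    "late_deliveries": "repeated late deliveries",
    "usage_drop": "drop in usage",
    "open_tickets": "unresolved support tickets",
}
contributions = {"late_deliveries": 0.30, "usage_drop": 0.15, "open_tickets": 0.16}

summary = leadership_summary("C001", 0.71, contributions, labels)
print(summary)
```

The “so what” and the caveats are deliberately left out of the helper, since those depend on judgement about which levers the business can actually pull.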

Common pitfalls and how to avoid them

- Treating explanations as truth: SHAP and LIME explain the model, not reality. If the training data is biased, explanations can be biased too.
- Using unstable features: If a feature changes definition over time, explanations will become misleading.
- Overloading leaders with charts: Pick one global view and one local example. Clarity beats volume.
- Ignoring monitoring: Model drift changes drivers. Build a routine to re-check explanations after major business shifts.

This is where interpretability becomes a governance tool, not just a visualisation step—an emphasis often reinforced in a data scientist course in Chennai focused on real deployment scenarios.
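A routine re-check of drivers can be as simple as comparing each feature's share of total importance between two periods. The sketch below assumes you store a global importance figure per feature for each period; the numbers and the 10-percentage-point tolerance are hypothetical.

```python
def importance_shares(importance):
    """Convert raw importance values into shares that sum to 1."""
    total = sum(importance.values())
    return {feature: value / total for feature, value in importance.items()}


def flag_driver_shifts(previous, current, tolerance=0.10):
    """Return features whose share of importance moved by more than `tolerance`."""
    prev_shares = importance_shares(previous)
    curr_shares = importance_shares(current)
    flagged = {}
    for feature in sorted(set(prev_shares) | set(curr_shares)):
        change = curr_shares.get(feature, 0.0) - prev_shares.get(feature, 0.0)
        if abs(change) > tolerance:
            flagged[feature] = round(change, 3)
    return flagged


# Hypothetical mean absolute contributions from two review periods.
last_quarter = {"late_deliveries": 0.18, "usage_drop": 0.15, "open_tickets": 0.07}
this_quarter = {"late_deliveries": 0.08, "usage_drop": 0.16, "open_tickets": 0.16}

shifts = flag_driver_shifts(last_quarter, this_quarter)
for feature, change in shifts.items():
    print(f"{feature}: share of importance changed by {change:+.1%}")
```

A flagged shift is a prompt for investigation rather than a verdict: it may reflect a real change in the business, a change in how a feature is defined, or model drift.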

Conclusion

Interpretable AI makes machine learning decisions transparent, defensible, and easier to act on. SHAP is strong for consistent, enterprise-wide insight into what drives model outcomes, while LIME is valuable for quick, local explanations in individual cases. When used within a structured workflow—clear decision framing, performance validation, explanation-led reviews, and ongoing monitoring—these methods build trust with non-technical business leaders and reduce the risk of deploying opaque models.